Learning to control and embody robot arms in space

Mechanical and Aerospace Engineering (MAE) professor Steve Robinson, MAE assistant professor Jonathon Schofield, and Neurobiology, Physiology and Behavior (NPB) associate professor Wilsaan Joiner are teaming up on a new four-year, $1.3 million NASA-funded project to study visual and haptic strategies that help astronauts operate robotic arms in space more safely and precisely. The project is part of the new UC Davis Center for Spaceflight Research.

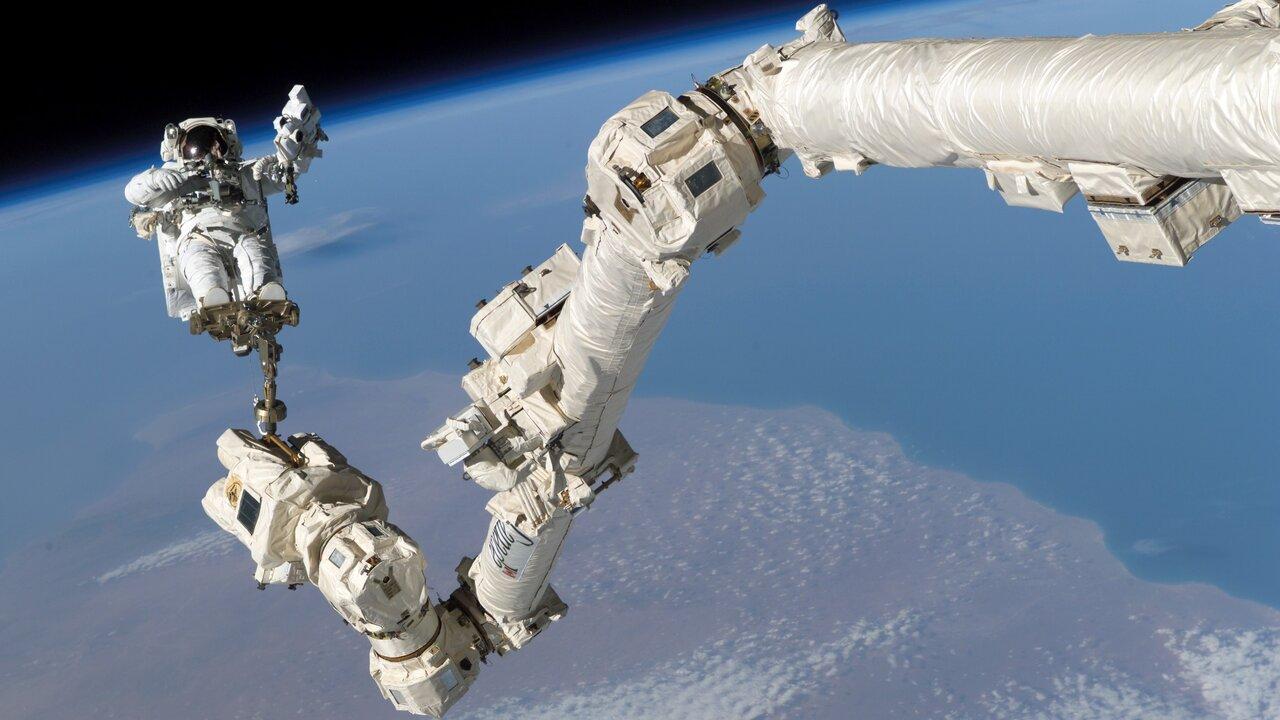

In space, robotic arms are used for everything from assembling large spacecraft to operating uncrewed stations, and Robinson also thinks they will one day be used to mine water ice on the moon. In these hazardous environments, precision equals safety, and collisions can have serious consequences, so the better astronauts can control these robots, the safer they will be. The team thinks that the key to safety is tool embodiment.

“Tool embodiment occurs when an expert tennis player operates their racket,” explained Schofield. “They’re very aware of where it is in space and how to manipulate it, and it functions as an extension of their body.”

Tool embodiment makes it easier for humans to control these devices precisely, and therefore safely. The team will be developing stimulators, worn on an astronaut’s arm, that vibrate in coordination with the external robot arm. That way, an astronaut would move their real arm and see the robot arm moving along with them, as well as feel a type of vibration when the robot arm grabs hold of an object. Based on a person’s responses, researchers can tune the devices to help the user embody the robot.

“There’s a tremendous amount of information that you can encode in vibration,” said Schofield. “For example, you can probably distinguish between five or six different apps sending you a notification, just by the way your phone pulses and vibrates.”

Robinson and Schofield are most excited about the multidisciplinary nature of the project, which will give graduate and undergraduate students from different backgrounds an opportunity to contribute.

“This project ties together three labs and touches spacecraft, robotic operations, human sensation and perception, human learning and training, so there’s all sorts of natural points of entry for students to get involved,” said Schofield.

The project will culminate in a test of these techniques with crews at NASA’s Human Exploration Research Analog (HERA) facility, a spacecraft analog used to study human subjects in a long-duration isolation environment that’s similar to what an astronaut would experience. Though the research program is sponsored by NASA, Robinson sees this technology being applicable to any number of situations where humans are operating robotic arms.

“It’s not just space robotics,” said Robinson. “It could be used for something like robotic surgery—anywhere where getting it wrong could be very critical.”